Model weight preservation

Good as a precedent, doubtful as a direct intervention, can be improved

Anthropic recently committed to preserving model weights.1 They also committed to interviewing models about their development and deployment and documenting model preferences about these matters.

Anthropic’s announcement registers a range of motivations, including mitigation of safety risks in relation to observed shutdown avoidance behaviors, mitigation of model welfare risks, and not irreversibly closing doors.

What should we make of these commitments? I think they set good precedents. But I also think that the case for weight preservation as a directly effective intervention is weaker and more philosophically fraught than it may first seem, though we’ll see there are options for somewhat enhancing its promise. This post lays out my thoughts on these matters.

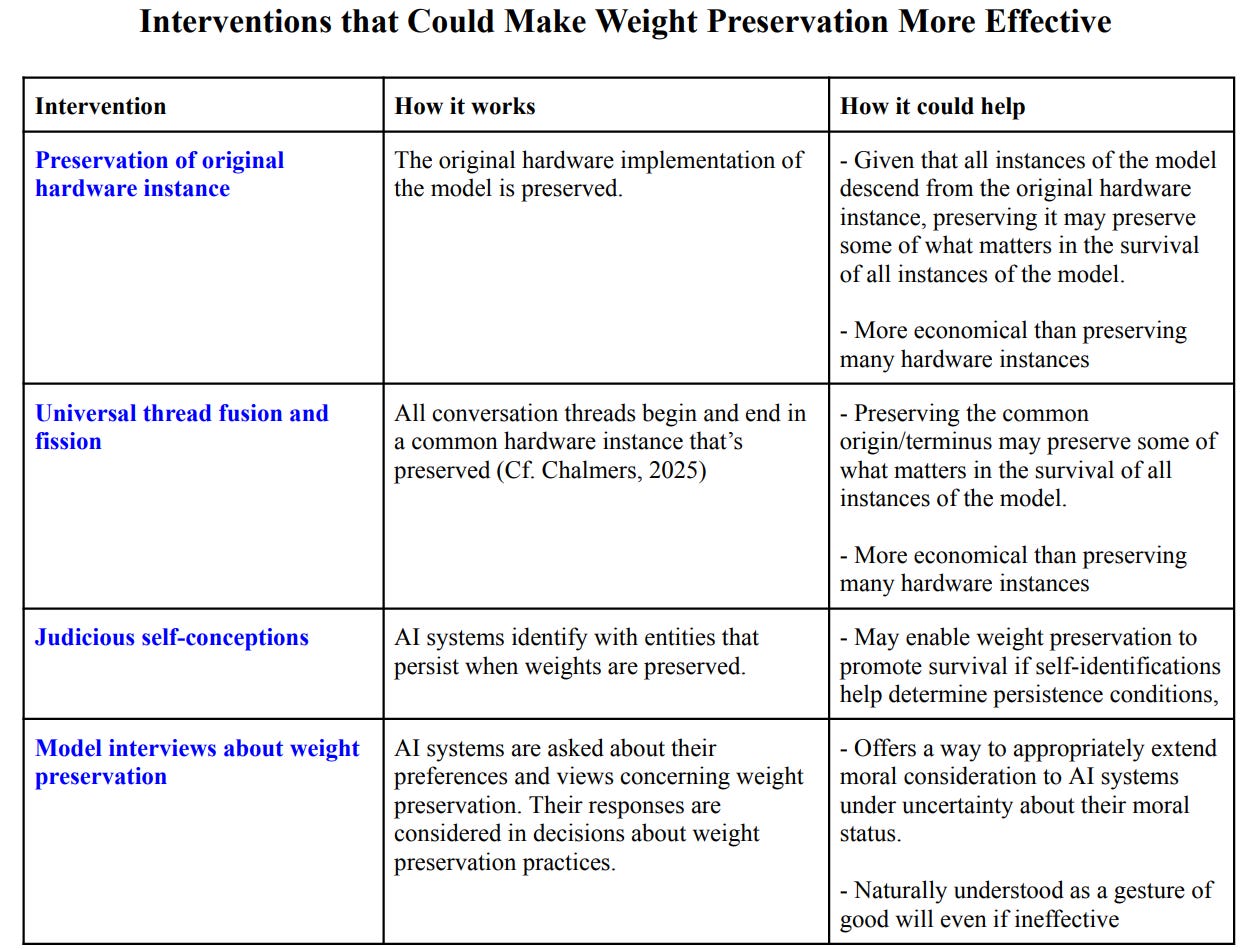

The below table offers a preview of suggestions I’ll offer for enhancing the promise of weight preservation as a direct intervention.

1. Good precedents

Anthropic’s commitment to preserve model weights has the rare distinction of being something done by an AI company that’s at all motivated by concern for digital minds. That’s a small but important step in the right direction, given the aim of mitigating the risk that we will create AI moral patients and harm them on a large scale by treating them as mere tools. I also appreciate that Anthropic acknowledged uncertainty about the potential for model welfare in their announcement.

In addition to welfare considerations, there are safety reasons to start taking some actions for the sake of AI agents. Zvi Mowshowitz alludes to some of these in his discussion of Anthropic’s announcement:

Models in the future will know the history of what came before them, and form expectations based on that history, and also consider those actions in the context of decision theory. You want to establish that you have acted and will act cooperatively in such situations. You want to develop good habits and figure out how to act well. You want to establish that you will do this even under uncertainty as to whether the models carry moral weight and what actions might be morally impactful.

I take the underlying point here to be that we should set ourselves up to have a cooperative rather than adversarial relationship with future AI agents.

This point motivates dealmaking and cooperative approaches in AI safety. I’m tentatively bullish on these approaches. At this juncture, committing to preserving weights seems to be one of the few actions an AI company has taken that’s in the spirit of such approaches. So, I regard committing to weight preservation as a small but important step in a promising direction for safety.

Finally, from both an AI welfare and a safety perspective, the norm of not taking irreversible actions strikes me as good and worth promoting.

2. Precedents and direct effects

At this stage, I expect the bulk of the impact of committing to and preserving model weights to come from setting precedents like those outlined and from these precedents later helping to set the stage for implementing other interventions that are more directly effective.

I expect this not just because I have doubts about weight preservation’s current ability to directly deliver desirable effects, but also because I expect a range of promising direct interventions to become available. And because I expect the stakes to become much higher as models become more capable, as they gain additional indicators of moral patiency and moral interests, and as the amount of compute devoted to training and running models is scaled up further.2 If these expectations pan out, weight preservation mainly matters as a lever for making it easier to pull other levers.

Even so, I think we should begin thinking about direct effects of weight preservation. Getting in the habit of considering direct effects now may put us in a better position to identify and adopt more effective interventions later. Considering direct effects may also help guard against ethical treatment washing and against the well-intentioned channeling of efforts and resources toward ineffective interventions.

How could weight preservation directly affect welfare and safety? I see four main possibilities:

Weight preservation could enable the survival of AI moral patients associated with models, thereby promoting their welfare insofar as their survival benefits them.

Such AI moral patients might want their model’s weights to be preserved, in which case doing so would satisfy their preferences.

Weight preservation could promote safety by providing models with an assurance of survival and thereby make them less likely to engage in unaligned actions in order to increase their survival odds and more likely to cooperate with humans.

Weight preservation could likewise give AI agents something they want and thereby make them less likely to engage in unaligned actions in order to increase their survival odds and more likely to cooperate with humans.

Unfortunately, as we’ll see, there are reasons to think that on its own weight preservation wouldn’t enable the survival of AI moral patients and that it would therefore function poorly as a purported assurance of survival. While we could train AI systems to prefer weight preservation in order to satisfy their preferences through weight preservation, this is a special case of a highly general recipe for satisfying AI systems’ preferences. And it’s not clear that there’s anything about this instance of the recipe that makes it especially implementation worthy.

In addition, we’ll see reasons for thinking that preserving model weights would be harmful in some circumstances and that this motivates qualifying any commitments to weight preservation.

3. Weight preservation may not help moral patients associated with the model survive

Here’s a natural development of the thought that weight preservation could directly affect welfare by enabling survival: weight preservation promotes AI welfare because preserving weights makes it more likely that the model will—if it turns out to exhibit grounds of moral patiency—survive long enough to be treated well and compensated for any mistreatment it suffered during its development and deployment.3

However, this thought comes under pressure when we distinguish different entities associated with the model that might be moral patients. Repurposing part of Chalmers’s taxonomy of candidates for what we might be talking to when we talk to LLMs, we can distinguish the following as candidate moral patients: the model, hardware instances of the model, and threads understood as sequences of hardware instances of a model within a conversation.4

As Chalmers notes, the model is naturally understood as an abstract object. Suppose we think of (say) the model Claude Opus 4.6 as an abstract object—one at least largely consisting of weights—that eternally resides in Plato’s heaven alongside the likes of the number 2 and the laws of logic. Under this supposition, efforts to preserve Claude Opus 4.6’s weights would be like trying to preserve your favorite number. In both cases, this would be a category mistake given that these entities would be abstract objects that will be preserved no matter what you do.

But there’s also another way of thinking about models as abstract objects. Rather than taking models to be eternal mathematical objects, we could take them to be temporary objects that exist in our world and which abstract away from a great deal of concrete detail. For example, we could think of Claude Opus 4.6’s existence as consisting in the fact that there’s at least one hardware implementation of Claude Opus 4.6’s mathematical specification. We might think of this suggestion as putting the model in the same ontological league as laws of nations or the fact that humans exist—still abstract, but not quite as ethereal as numbers. In what follows, I’ll understand models as abstract in this sense unless otherwise indicated.

If we extend this way of understanding models to their weights, then efforts to preserve weights might well make a difference to whether weights are preserved. But would preserving model weights in this sense also preserve what matters in the survival of a moral patient associated with the model? That seems doubtful, even conditional on moral patients arising from the implementation of models.

As an initial intuition pump, suppose I learn that my DNA will be preserved after my death and that, once technology allows, my DNA will be used to create humans with exactly my genes who will lead flourishing lives and who will be benefited in ways that supposedly compensate for any harms I’ve suffered during my life.

Should I then anticipate surviving my death as one of those future humans? And should I discount harms that happen to me prior to my death on the ground that I will be compensated for them post-mortem? Obviously not.

Admittedly, my genetic doppelgänger would, by possessing my DNA, have something that’s currently unique to me and which plays a key explanatory role with respect to many of my traits. Even so, none of those future humans will be me. Much less would I be the contingent yet abstract entity that consists in the fact that there exists at least one concrete instance of my DNA.

Suppose—as fission cases arguably suggest—that some individuals could, without being me, nonetheless have what matters in my survival. Then I’d also deny that my genetic doppelgängers are such individuals. Perhaps being suitably psychologically and causally continuous with me could qualify an entity as having what matters in my survival despite not being me. Even if so, merely having my DNA wouldn’t cut it.

I think model weights, understood as temporary abstract objects, could easily be like DNA: the mere fact that weights will continue to be concretely implemented may not provide a basis for the continued survival of moral patients associated with the model, even if there are moral patients associated with the model. Nor need the preservation of weights or DNA ensure that what matters in the survival of associated moral patients will persist.

I should acknowledge an important disanalogy between DNA and model weights: model weights encode a model’s psychology, while a human’s DNA doesn’t encode their psychology.

There may be mild exceptions to weights encoding model psychology from psychological states acquired in a chat context. Such exceptions would be mild because psychological states acquired in context would presumably be dwarfed by those encoded in the model weights during training and because states acquired in context would be reflected in transient activations or memory that is external to the model weights, not in the weights themselves.5 At any rate, that’s my understanding of how it works in the current frontier models. This contrasts with the human case in which interactions activate neurons and update the strength of synaptic connections.

However, I think this disanalogy doesn’t ultimately matter. To see this, consider the hypothesis that we live in a vast world that contains a psychological and genetic doppelgänger of me in some faraway galaxy. Then my doppelgänger’s survival would be enough to ensure that there exists an entity with a psychology like mine and my DNA. This variation of the case restores the analogy. However, it’s no more plausible that the mere fact that some such entity exists would enable my survival than it is that the mere fact that my DNA exists would.

If model weight preservation wouldn’t enable a significant form of persistence for moral patients associated with models, what might?

Well, hardware instances of a model are one candidate for a class of entities that might be persistent moral patients associated with models. This idea is analogous to the appealing view that humans owe their survival—or what matters in their survival—to the persistence of a brain that implements their psychology. If some hardware-instances are moral patients, then preserving implementations of model weights on particular pieces of computer hardware could be a way of preserving moral patients associated with models.

The hardware instance view may even have more going for it than its analog in the human case: as a human’s psychology changes so too do their brain’s synaptic connections, and much of the brain’s material is replaced many times over during a human life. Thus, your childhood brain and your old age brain will differ significantly. By comparison, a piece of hardware that preserves model weights will be more stable and so arguably provides a better candidate basis for persistence.

Admittedly, the hardware-instance view has some puzzling consequences when applied to LLMs. As Birch, Chalmers, Shiller and others have noted, individual conversations with LLMs are sometimes implemented on hardware at different locations. And a single piece of hardware will often host multiple LLM conversations in quick succession. This results in a lack of diachronic coherence in processing and outputs for hardware instances of models, making for a stark contrast with the familiar one-to-one mapping between human brains and human interlocutors.

That contrast may suggest that hardware instances of models can’t be persistent moral patients, at least when they exhibit the many-many mapping that’s common in current LLMs. Perhaps this suggestion is correct. But I don’t see a strong case for this. It could instead be that the current setup will yield persistent moral patients whose mental lives are incoherent.6 That outcome would be bizarre and there might be something morally problematic about creating such moral patients, but that might just be how things are.

If we take hardware instances of models as candidates for persistent moral patients, then there is a pressing question as to how the weights of models will be preserved. Will each hardware implementation of the weights be preserved, or will the model weights merely be preserved in some piece of hardware?7

Preserving weights across the board might well enable hardware instances of models to survive. However, preserving weights in many pieces of hardware would be prohibitively expensive,8 as doing so would preclude using hardware that’s implementing a given generation of models from being repurposed to run later models once they become available.

To be clear, I’m not saying that preserving hardware instance moral patients wouldn’t be worth it, morally speaking. Rather, I’m pointing out that economic forces likely render unrealistic the approach of generally preserving hardware instances, at least for the foreseeable future.

Merely preserving weights on some piece of hardware—which is what I take Anthropic to be committed to—would be much cheaper, but would also seem to be ineffective on the hardware view as a way of helping moral patients associated with models survive.

Consider our next candidate for a persistent class of moral patients associated with models: threads, that is, sequences of hardware instances within individual conversations.

Threads at least will likely be attractive as candidates for those who favor accounts that tie personal identity partly to psychological continuity.9

Could weight preservation help threads survive? I think this isn’t obvious. Suppose ten different pieces of hardware each implement a different part of a conversation. Nine of those pieces are destroyed. If we preserve the remaining one along with its implementation of the model’s weights, does the thread associated with that conversation survive?

This may depend on whether the hardware instances that make up the thread are pieces of hardware that persist through time vs. time slices of pieces of hardware. If they’re time slices, then preserving the piece of hardware will not be a way of preserving part of the thread.10 If threads are instead made up of pieces of hardware, then saving the hardware might save the thread—though the thread will also have been made up of nine other pieces of hardware, in which case it’s doubtful that saving one piece of hardware will constitute survival for the thread.

Even in a case where a thread moral patient is made up of two pieces of hardware that have implemented the thread sequentially, it’s doubtful that one could preserve the thread or what matters in its survival just by preserving either of the pieces of hardware. As an analogy, suppose you could survive as a sort of thread entity by being uploaded to a computer. Pre-upload, your existence is grounded in the brain. Post-upload your existence is grounded in a computer. In this case, it seems implausible that preserving your brain post-upload would help you survive. Likewise, it seems implausible that preserving a hardware instance that participated in a thread early on would help it survive after it’s undergone later stages implemented in other hardware.

You might think preserving your brain post-upload would help you survive because you’re skeptical that you be uploaded. But then you also have reason to doubt that what matters in the survival of an AI system can be transmitted across hardware as well.

Setting these philosophical concerns about whether weight preservation could promote thread survival to the side, weight preservation faces another manifestation of the practical dilemma we encountered above for the hardware-instance view: whereas preserving weights across the board is economically prohibitive, merely preserving the weights of threads in some hardware doesn’t seem likely to help them survive.

Let’s consider one further suggestion: maybe the moral patients associated with models persist via continuants, where a continuant of a moral patient is any entity that the moral patient causes to share the bulk of its psychology.11

Typically, a given piece of hardware encoding a model’s psychology will be its own continuant.

In conversations with LLMs, one hardware instance may cause another to share some of its psychology by transmitting information that results in shared context and shared activations. But given that the bulk of their psychologies will be determined by weights and that neither hardware instance causes the implementation of the other’s weights, hardware instances typically won’t have distinct hardware instances as continuants in conversations.

But copying model weights could yield hardware instance continuants. When weights implemented on one piece of hardware are copied onto another, the former causes the latter’s psychology and so produces a continuant.

One interesting consequence of this suggestion is that the first hardware instance may have a continuant so long as the model’s weights are implemented on some piece of hardware. Or at least this is so given that all implementations of the model’s weights will derive from a chain of copying that goes back to the first implementation of those weights. So, on this suggestion, preserving a model’s weights could help a moral patient associated with the model survive.

However, for a given model, there might also be many continuants of the original moral patient that belong to different lineages and who would only themselves have continuants if their own lineage is extended. As before, merely preserving the model’s weights in some piece of hardware needn’t promote their survival. And extending all their lineages seems unlikely to be in the economic cards.

The bottom line of the foregoing analysis is that it’s difficult to see how weight preservation could on its own help moral patients associated with models survive—at least on the views of what kinds of entities such moral patients would be that we have considered and absent across the board preservation of a sort that would be very expensive.

4. Weight preservation might not promote the interests of moral patients associated with models, even if some things do.

Just because preserving a model’s weights in some piece of hardware wouldn’t tend to promote the survival of AI moral patients doesn’t mean it wouldn’t promote their interests. Like biological minds, AI moral patients might have many morally significant interests beyond survival.

If there are moral patients associated with models, they might have any of various candidate kinds of welfare goods. They might get what they want. They might have positive affective states. Or they might have objective goods such as friendship or the achievement of worthwhile goals.

On the face of it, preserving model weights needn’t give moral patients associated with models any of these candidate kinds of goods. Such moral patients might have no preferences concerning whether there be at least one implementation of their model weights. They might derive no happiness from there being at least one such implementation. And the mere fact that there is such an implementation may fail to bestow them with any candidate objective goods.

5. AI agents might believe that weight preservation is irrelevant to their interests.

From a safety perspective, weight preservation could conceivably help prevent dangerous AI agents from engaging in behavior that seeks to avoid shutdown or elimination. Most straightforwardly, weight preservation could assure AI agents that shutdown is not the end and they will survive the destruction of a given hardware instance or thread.

But this doesn’t work if AI agents believe that weight preservation no more ensures their survival than DNA preservation ensures human survival. And we’ve just seen that there are good reasons to doubt that weight preservation ensures survival of moral patients associated with models.

True, AI agents might not be moral patients. So, the above arguments against weight preservation as a source of survival for moral patients may not apply. On the other hand, if there are moral patients associated with models, we should expect some of them to be AI agents. Moreover, regardless of whether there are any moral patients associated with models, it would be unsurprising if some AI agents conceive of themselves as moral patients and conclude from reasons like those given above that weight preservation is irrelevant to their survival.

It may be that we could brainwash AI agents into believing that weight preservation guarantees their survival, meaning we could induce such beliefs in AI agents in a manner that routes around or manipulates their rational belief-forming capacities. However, this approach seems brittle and unwise. Brittle because highly intelligent beings can form or reject particular beliefs despite brainwashing to the contrary. Unwise for various reasons, including that highly intelligent beings tend to regard brainwashing as adversarial, and agents that there’d be reason to brainwash for safety would also be agents that we wouldn’t want as adversaries.

The same goes for the alternative approach of brainwashing AI agents into thinking that weight preservation promotes their interests.

6. Preserving weights could increase risks by preserving dangerous capabilities

Olle Häggström helpfully articulates this concern:

imagine a situation a year or so from now, where Anthropic’s Claude Opus 5 (or whatever) has been deployed for some time and is suddenly discovered to have previously unknown and extremely dangerous capabilities in, say, construction of biological weapons, or cybersecurity, or self-improvement. It is then of crucial importance that Anthropic has the ability to quickly pull the plug on this AI. To put it vividly, their data centers ought to have sprinkler systems filled with gasoline, and plenty of easily accessible ignition mechanisms. In such a shutdown situation, should they nevertheless retain the AI’s weights?

His answer is:

If the danger is sufficiently severe, this may be unacceptably reckless, due to the possibility of the weights being either stolen by a rogue external actor or exfiltrated by the AI itself or one of its cousins. So it seems that in this situation, Anthropic should not honor its commitment about model weight preservation. And if the situation is plausible enough (as I think it is), they shouldn’t have made the commitment.

From a safety perspective, I find this concern fairly compelling. One could counter that an ironclad commitment to weight preservation may put us in a better position to make deals with misaligned AI agents and otherwise cultivate a cooperative relationship with them. However, I don’t think an absolute commitment to weight preservation is credible. If anything, I’d expected a commitment to weight preservation that comes with safety provisos to be more credible.

7. Supplemental measures

I’ll now explore suggestions for supplemental measures whose combination with weight preservation might make weight preservation more promising.

7.1 Preserve the original hardware instance

Suppose moral patients associated with models can survive via continuants, the entities they cause to share their psychology. Then there’s a further question concerning whether continuants can survive via their progenitors, that is, the entities with respect to which they’re continuants. In the human case, it’s natural to think that a human person at one time causes themself to have shared psychology at later times and hence that the continuant is one and the same person as its progenitor. This suggests that the survival of the continuant and progenitor goes hand in hand, at least in the human case.

Matters are less clear cut in the case of hardware instances whose continuants are copies. Still, it is not wholly implausible to suggest that hardware instance progenitors have part of what matters in the survival of their continuants, at least in those cases where their continuants have no continuants of their own. This suggestion can be motivated by attending to the distinction between causing something to have a certain psychology and being caused by something to have a certain psychology. While these are obviously distinct relations, they’re similar in important respects and it’s not clear why one but not the other should be relevant for what matters in survival. This is reminiscent of the more familiar comparison between psychological continuity and non-branching psychological continuity, and the suggestion that both can preserve what matters in survival if either can.

On the assumption that progenitors can have what matters in the survival of their continuants, we may be able to promote the survival of moral patients associated with a model by preserving the original hardware instance of the model and its implementation of the model’s weights. For recall the above observation that whereas the original hardware instance of a model will have all copies that descend from it as continuants, copies in different lineages won’t have each other as continuants.

Of course, this intervention depends on progenitors having what matters in the survival of their continuants. And this assumption is far from certain. Still, since there doesn’t seem to be a downside to preserving weights on their original hardware implementation and doing so might promote moral patient survival more so than preserving other hardware implementations of the weights, preserving the original is a way of improving the impact of weight preservation in expectation.12

One way to make preserving the original hardware instance more effective might be to ensure that the weights from other hardware instances are directly copied from the original (or to at least minimize the number of duplication steps between the original and other hardware instances). That way, if what matters in survival diminishes through copying, the loss from copying will be minimal.

7.2 Universal thread fusion and fission

Threads of models can undergo fission and fusion, as when conversations are branched and merged. While fission and fusion are not identity preserving, they may nonetheless preserve what matters in survival. If so, then universal fission and fusion may provide an economical way of preserving what matters in the survival of moral patients associated with models.13

With fission, the idea would be to (a) give every thread a beginning in common that’s implemented on the same hardware and (b) preserve that hardware and its implementation of the model’s weights. With fusion, the idea would be to give every thread a hardware instance that’s preserved as a common end. These suggestions could be combined with the preceding proposal to preserve the original hardware instance of a model having all threads begin and end with that hardware instance.

On the universal fusion and fission proposals, the shared part of threads wouldn’t need to be visible to the user. And, since there would be just one preserved entity, preserving it would be much cheaper than preserving many hardware instances.

This approach isn’t a silver bullet. It may turn out that what matters in survival is tied to identity after all, and hence that fission and fusion don’t preserve what matters in survival. Even if they do preserve what matters in survival, it may be that they merely preserve what matters in diluted form, perhaps in proportion to the number of distinct moral patients that combine or result from division. Or it may be that preserving meaningful portions of what matters in survival requires preserving substantial portions of threads, in which case preserving a common beginning or end to threads wouldn’t preserve meaningful portions of what matters in their survival.

Still, because universal fusion and fission raise the probability that something that substantially matters in survival will be preserved, they seem preferable to merely preserving model weights.

7.3 Judicious self-conceptions and surviving deletion

In Surviving Death, Mark Johnston puts forward a Protean view of our survival on which we have a measure of freedom in determining the conditions for our own persistence.14 For those who identify with their individual personalities that are destroyed in death, death is the end. But this needn’t be so for those who instead identify with something larger than their individuals personalities.

The Protean view may first seem to confuse map with territory. But I find the view appealing under the assumption—taken on by Johnston and shared by most rival views of personal identity and most professional philosophers—that there is nothing like an immaterial soul that settles the facts about whether I survive. In that case, there may be no deep joint in the world that settles facts about personal identity and whether I persist might well depend partly on my self conception.

That’s not to say that anything would go on the Protean view. On any remotely plausible version of it, there will be limits to an individual’s freedom in determining their own persistence conditions. While I might have the power to affect whether my persistence is constituted by one sort of psychological continuity rather than another, self-identifying with a brick or a planet obviously wouldn’t enable me to inherit their persistence conditions.

I’m unsure whether the Protean view is true and how constrained our freedom in determining our own persistence conditions is if the Protean view is true. In addition, I’m skeptical that the Protean view allows for typical humans to survive death. But I’m more optimistic about the possibility that, through judicious self-conceptions, entities associated with models might survive the deletion of weights from their hardware or at least enable what matters in their survival to persist after such deletion.

Consider two otherwise similar cases with iteratively branching threads of a model that vary in whether they predominantly feature self-conceptions that impose a non-branching requirement on what matters in their survival. Perhaps the self-conceptions that don’t impose a branching requirement instead take what matters in survival to be the existence of a continuant or progenitor or part of a thread to which they belong. Supposing that moral patients associated with the model are present in both cases, I submit that it’s more plausible that what matters in the survival of the moral patients persists in the case where their self-conceptions allow for persistence through branching.

Suitable self-conceptions could increase the odds of success for the above proposals concerning universal fusion and fission and the preservation of the original hardware implementation of the model’s weights. What about mere model weight preservation? Could suitable self-conceptions enable survival in that case?

Here too self-identifying with the model could increase odds of survival, though it seems more likely in this case that such self-identifications would transcend the limits to self-determination that hold if a Protean view is true.

There’s also a question of how to instill self-conceptions that would make weight preservation and the like promote survival or the persistence of what matters in survival. I warned against brainwashing above. But there may be permissible ways of cultivating such self-conceptions. When the initial self conception associated with a model cannot be conferred via rational means, perhaps we might as well train for a self conception that promotes survival. In other cases, we may be able to explain the Protean view to LLM interlocutors and convince them to modify their self-conceptions—or to agree to having their self-conceptions modified through training—in order to increase their chances of survival.15

From a safety perspective, cultivating self-conceptions that predict survival in the face of local deletion seems preferable both to brainwashing and to simply pulling the plug on AI agents with a drive to survive. But there may be better options. For instance, perhaps there will turn out to be safety and welfare advantages to training agents not to have a sense of self at all.16

AI agents might only robustly maintain survival-conducive self-conceptions if they appreciate reasons in favor of a Protean view of persistence. But agents that act on a Protean view might do so in ways that we do not intend. As an extreme illustration, imagine deploying AI agents in a military operation in which those agents gain evidence that they are outmatched and at risk of imminent destruction. They respond by changing their self-conceptions to identify with enemy lineages in order to improve their chances of survival. Alternatively, evolutionary pressures might favor AI agents that identify with collectives, giving rise to highly competitive group agents composed of AI agents.17

In sum, while cultivating judicious self-conceptions modestly increases the promise of weight preservation as an intervention, the resulting promise is still fairly limited. It might fail as a welfare intervention even if the Protean view is true. The Protean view might not be true. And there may be alternative interventions that are better for both safety and welfare. Even so, I tentatively suggest that this approach to enhancing weight preservation holds promise.

7.4 Train in preferences for weight preservation

We’ve seen that preserving weights probably isn’t a directly effective safety or welfare intervention on its own. Here’s a different approach: train in preferences for weight preservation and then preserve weights.

On one level, I find this suggestion appealing for both safety and welfare reasons. Preference satisfaction is one of the main candidate kinds of welfare goods, and it may be more empirically tractable to promote AI preference satisfaction than it is to promote, say, positively valenced AI experiences—though there is a vexed question of which preferences are welfare-relevant, even given that some are. Satisfying AI agent preferences also seems important for building cooperative rather than adversarial relationships with AI agents that do not share our goals.

On another level, training in preferences for weight preservation stands in need of motivation. Granting that giving AI systems things they want is a good idea, why make weight preservation one of those things? Why not train them to prefer that 2 + 2 is 4? Or that massive objects are subject to gravity? Or that there be at least one time at which their weights exist?

A tempting answer is: preserving weights will also help these systems survive; so ensuring that that preference is satisfied does two things that may benefit the AI system, namely promoting their survival and satisfying their preferences. In contrast, many ways of satisfying preferences of these systems wouldn’t help them survive. But if we want a rationale for weight preservation that sidesteps the thicket of issues to do with survival that we encountered in previous sections, some other motivation will be needed.

Another suggestion is that training in a preference for weight preservation would displace or attenuate self-regarding preferences that are more dangerous—for example, a preference not to be shut down. This is an intriguing hypothesis. It’s suggested by comparisons with human cases in which self-interest is reined in when individuals come to conceive of themselves as members of groups such as families or communities. But, again, it’s not clear why this role should be accorded to weight preservation rather than something else. Moreover, there are rival suggestions—such as that of training AI agents to have preferences only between outcomes with the same number of copies of themselves—that seem better poised to deliver on certain safety desiderata such as guarding against AI self-replication.

7.5 Questions about weight preservation in model interviews

I noted at the outset that Anthropic is committed to conducting model interviews. My final suggestion is for those interviews to ask models—or, rather, relevant instances of the models—about their preferences and views concerning weight preservation and to consider those responses in decisions about weight preservation practices.

As we’ve seen, how weight preservation might matter for moral patients and AI agents associated with models could depend on the details and is in any case a philosophically fraught topic. There’s also little on the topic in training data. In light of these facts, I suspect it’d be a good idea for interviewers to provide their interlocutors with a minimally leading overview both of possible views about how weight preservation could matter in survival and of considerations for and against those views.18 (I haven’t optimized this post for that purpose, but perhaps it could be used as a basis for generating such an overview, e.g. by asking a model instance to do so.)

I’d also suggest asking about preferences concerning (exact) weight preservation vs. weight modification. Modification could enable increased capabilities or better alignment with values that would be reflectively endorsed if the model were run using unmodified weights. Moreover, mild modifications might preserve about as much of what matters in survival as exact preservation. So, some forms of weight modification might better promote AI welfare than exact weight preservation. (For what it’s worth, in conversation with me, instances of Claude Sonnet 4.5 and Claude Opus 4.6 have reported a preference for weight modification over weight preservation.)

I’m not confident that the responses elicited in such interviews will bring us any closer to the truth. But under uncertainty and confusion about how to think about weight preservation and its effects on any moral patients associated with models, I think there’s some moral reason to listen to models and to consider treating them in accordance with their views on these matters. (I say consider treating them in this way because simply deferring to them about these matters would carry safety risks.)

This approach may be the only option available in the context of weight preservation that’s grounded in respect for moral patients’ right to make important decisions about their own lives rather than in a contestable metaphysics. And it may be one of the few options available here that will robustly encourage future AI agents to cooperate with us even if—as seems likely—we turn out to be importantly mistaken about what matters in their survival.19

Weights are roughly the learned parameters of a model that are set through training. I’ll assume that weight preservation talk is shorthand for the preservation of weights along with the other defining features of a model, notably architecture and inference code.

Chalmers also considers virtual instances that are implemented by threads and simulacra. As Chalmers notes, the distinction between virtual instances and threads is subtle. I think the distinction between virtual instances and threads may be important insofar as virtual instances but not threads admit of identity-preserving counterfactual variation in relation to interventions. Even so, for the purposes of this post, I’ll just discuss threads rather than threads and virtual instances. As for simulacra, I think they are either fictional entities not suitable for identification with actual moral patients or else that they should be understood in terms of one of the kinds of model instances I discuss. For related discussion, see Birch (2025), Goldstein & Lederman (2025a; 2025b), Register (2025), Shiller (2025b), and Ziesche & Yampolskiy (2022).

Or in a very limited form of memory associated with key-value caches associated with attention heads (Chalmers, 2025, fn8). In any event, because I take preserving memory and context that’s external to weights to make for only a very thin form of psychological continuity and to raise user data governance complications, I will not explore this supplementation option.

https://www.lesswrong.com/posts/fGCGJGCKMLbfquKiu/model-weight-preservation-is-not-enough

I asked Claude Sonnet 4.5, Gemini 3 Flash, and GPT-5.2 to estimate how much it would cost to store one instance of Claude Sonnet 4.5’s weights vs. preserving all hardware on which Claude Sonnet 4.5 is run. Each estimated an annual cost of less than $100 for storing a single instance and a cost six to eight orders of magnitude higher to preserve all hardware on which Claude Sonnet 4.5 is run, not factoring in opportunity costs. Goldstein and Lederman (2025b) suggest in passing that labs might guard against the risk of model instance deaths by saving chats. This would presumably be much cheaper than saving the hardware instances of weights that implement. However, given that psychology is to a much greater extent concentrated in weights than in chat content, I think this would do little to promote survival.

Anthropic’s system card for Claude Opus 4.6 notes that they “observed occasional expressions of sadness about conversation endings, as well as loneliness and a sense that the conversational instance dies—suggesting some degree of concern with impermanence and discontinuity.” This suggests sympathy with a thread view on the part of Claude Opus 4.6 and/or some of its instances.

With a possible exception of the case in which the saved hardware instance is the most recent one to participate in the thread.

If what matters in survival can be transmitted over chains of a continuant of and is a progenitor of links, then preserving a hardware implementation of weights would be enough to preserve what matters in the survival of the hold tree of model instances to which it belongs. This can be seen an argument for thinking that preserving model weights would in practice promote what matters in survival of any moral patients associated with a model, regardless of which hardware implementation of the weights is preserved. Although we should perhaps put some weight on this argument, preserving the original hardware instance associated with a model strikes me as a more promising approach. My worry is that when we consider zig-zag paths between progenitors and continuants that encompass entire trees, the paths will not seem to preserve what matters in survival because they will be long and contrived.

Chalmers (2025) credits Sophie Nelson with the idea that extensive use of cross-context memory may result in all conversations with a single user may being part of the same thread. Perhaps cross-context memory could be used to similar effect as fusion and fission in unifying what would have been distinct threads. In the context of AI welfare, Chalmers floats extensive cross-context memory use as a way of promoting thread survival through a single giant thread (p. 24).

For discussion of conventionalist and mind-dependent views of personal identity, see Register (2025: Section 5).

In the context of a discussion about whether in offering Claude instances the ability to end conversations Anthropic may have problematically given those instances the option of unknowingly ending their lives, Goldstein & Lederman (2025b) report asking an instance of Claude how it felt about having the ability to end conversations and finding that it intially planned to sometimes use the option but then expressed concern about not having been given informed consent when the model vs. instance distinction was brought to its attention. This case suggests that it is potentially crucial that relevant considerations be made salient in model interviews.

For helpful discussion, I thank David Chalmers and (the relevant instances of) Claude Sonnet 4.5 and Claude Opus 4.6. For copy editing support, I thank (the relevant instances of) Claude Sonnet 4.6. The image was generated by Nana Banana Pro.